TikTok & Instagram’s Attempts to Curb Misinformation

Misinformation on social media is something we all come across, whether we realize it or not. TikTok and Instagram both claim they are working to reduce it, but after comparing what they say they do with what I actually experience using the apps, I think both platforms are doing just enough to say they tried. One thing that stood out to me while researching this is that even the updates made in 2025 going into 2026 are mostly extensions of systems introduced in 2023 and 2024. That matters because misinformation and AI content have become far more advanced, while the solutions are only updated versions of older ideas that are not working well, not completely new approaches.

TikTok

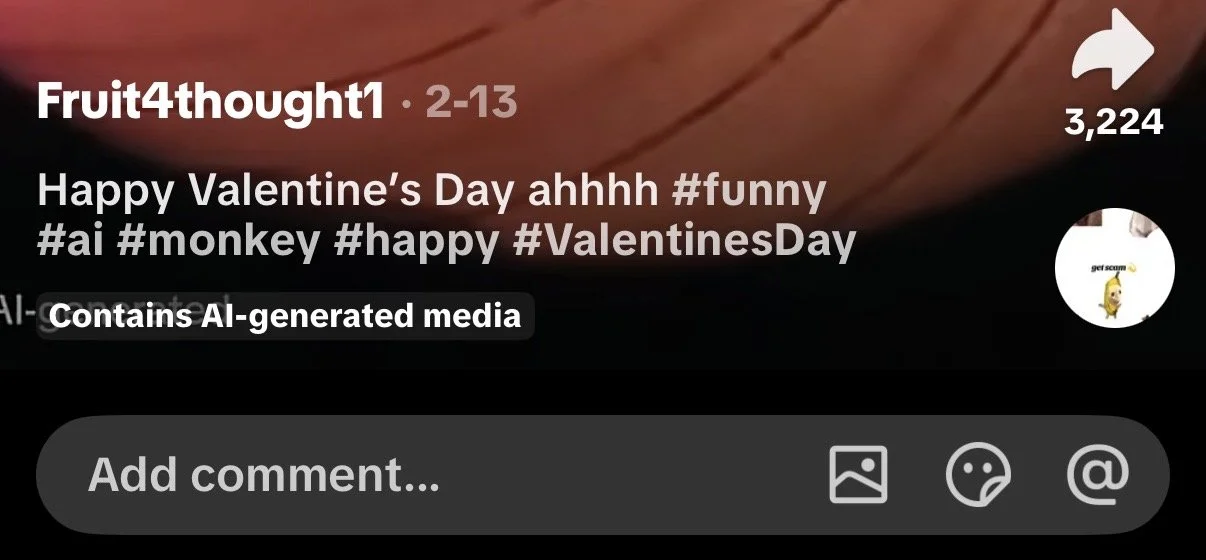

TikTok’s current approach focuses on labeling AI-generated content, removing harmful misinformation, and allowing users to report misleading posts. The platform says creators should label realistic AI-generated content and that it can automatically label some posts using metadata systems (About AI-generated content). On paper, that sounds like a strong system, but the way it actually works is problematic. TikTok relies heavily on creators to label their own content, which defeats the purpose. If someone is intentionally using AI to create misleading or attention-grabbing content, why would they choose to label it as AI? That makes the system feel more like an option than a requirement, even if the policy says otherwise.

There have also been instances where content was labeled as AI when it was not, which shows that even when the system is used, it is not always accurate (Why is this happening and is it happening to anyone else | ai videos | TikTok). When I first noticed the labeling feature, it intrigued me because it seemed like something viewers should be aware of. But that did not last long. I quickly noticed how inconsistent it was. I come across clearly AI-generated content every couple of scrolls, and there is almost never a label on it. After seeing that pattern over and over, I stopped even looking for the label, and that alone made me trust the platform less. That inconsistency weakens the entire system. Overall, TikTok’s system ends up placing more responsibility on users to figure out what is real instead of controlling the problem itself. Personally, I do not use TikTok as a news source, and when I do engage with informational content, it comes from reputable news sources.

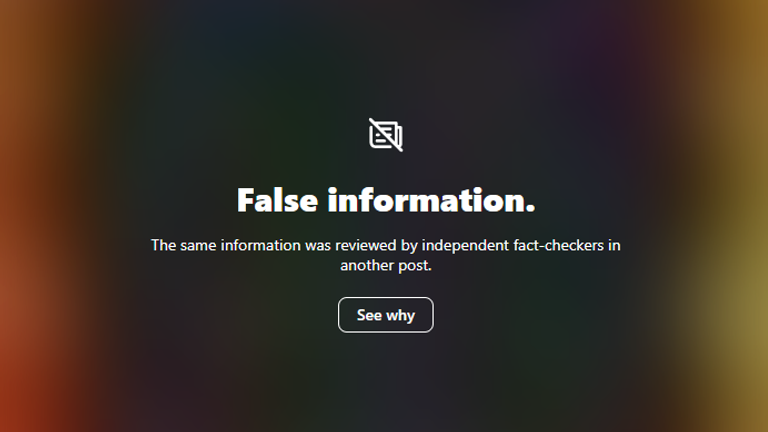

Instagram’s approach has shifted recently, but what stands out to me is that I have not really experienced the new system. Instagram initially relied on third-party fact-checking but has now moved toward a Community Notes-style system where users add context to posts (More Speech and Fewer Mistakes). This is supposed to make moderation more open and less controlled by the platform itself. But from my perspective, I don’t see it. I do not come across even the older warning labels that often, though that may be because of the type of content on my feed.

I also do not naturally engage with a lot of political content unless something major is happening, which could explain why I see these labels less. Still, that raises another issue. If these systems are not consistently visible across different types of feeds, then they are not being experienced equally by all users. And even when I do see warning labels, I still click on the content, not because I doubt the label but because I am curious about what misinformation is being pushed. So even when the system is present, it does not actually stop engagement. That matters because a major cause of the spread of misinformation is people simply finding something entertaining.

The new Community Notes approach also raises a concern for me. If this system is based on “the community” deciding what is misleading, who is to say that the community itself is informed or unbiased? People who contribute to these notes could also be misinformed or intentionally spreading their own narratives. That makes the system feel less reliable because it depends on the same environment where misinformation already spreads. Experts have raised concerns about whether community-based moderation systems can effectively address misinformation at scale (Ask the expert: What Meta’s new fact-checking policies mean for misinformation and hate speech | MSUToday). Instead of strengthening its approach, it feels like Instagram shifted toward something that looks more open but may actually be less controlled. I also think it simply puts less work on the platform.

Conclusion

Looking at both platforms together, I do not think their policies fully work. TikTok’s system lacks consistency, and Instagram’s system raises questions about credibility and reliability. I think the bigger issue is how much these platforms rely on users instead of taking stronger responsibility themselves. Both systems expect users to question, interpret, and verify content, which is not realistic for everyone. Another issue is transparency. These platforms are quick to announce new features, but when it comes to misinformation policies, everything feels quiet. That makes their efforts feel performative, as if they are doing just enough to say they addressed the problem without actually taking full accountability.

What is missing from both platforms is consistency, accountability, and stronger control over how misinformation is handled. TikTok needs to enforce AI labeling in a way that actually feels required, not optional, and ensure that clearly AI-generated content does not go unmarked. Instagram should not rely entirely on community-based moderation. There should still be expert involvement, especially for serious topics like politics and public health. A system that combines expert fact-checking with community input would be much more reliable than leaving it up to users alone. I think these changes would actually improve how misinformation is handled because they directly address trust and responsibility rather than just visibility.

At the end of the day, both TikTok and Instagram are aware of misinformation, but their current efforts do not match how serious the issue has become. I still feel like I have to do most of the work myself when deciding what to trust, and if that is the case, then the platforms are not doing enough.